Prompt Playground

The Playground provides an interactive environment for testing prompts against live LLM providers with real-time streaming responses, variable substitution, and multi-modal support.

Overview

Test prompts before production deployment. The Playground automatically detects variables in your templates, presents fillable forms, and streams responses from any configured LLM connection. Save successful tests for later comparison or export results for analysis.

Key Features

Auto-Detected Variables

Variables use curly brace syntax: {variable_name}. The Playground scans your prompt template and generates input fields automatically.

Example:

```text
You are a {role} helping with {task}.

User question: {user_input}

Context: {context}
```

The UI displays four input fields:

- role (e.g., “customer service agent”)

- task (e.g., “technical troubleshooting”)

- user_input (e.g., “My printer won’t connect”)

- context (e.g., “Customer has HP LaserJet Pro”)

Variables support multiple types: text, numbers, JSON objects, and file attachments.

Variable names must be alphanumeric with underscores. Nested variables like {user.name} are not supported — use flat keys like {user_name}.
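A minimal sketch of how detection and substitution could work, assuming the naming rule above is enforced with a regular expression. The function names (`detect_variables`, `dotted_placeholders`, `render`) are hypothetical and not part of the Playground; JSON values are assumed to be inserted as serialized JSON.

```python
import json
import re

# Assumed rule: a variable is {name} where name uses only letters, digits, and underscores.
VARIABLE = re.compile(r"\{([A-Za-z0-9_]+)\}")
# Dotted names such as {user.name} are unsupported; this pattern finds them so they can be flagged.
DOTTED = re.compile(r"\{([A-Za-z0-9_]+(?:\.[A-Za-z0-9_]+)+)\}")


def detect_variables(template: str) -> list[str]:
    """Return each valid variable name once, in order of first appearance."""
    return list(dict.fromkeys(VARIABLE.findall(template)))


def dotted_placeholders(template: str) -> list[str]:
    """Return unsupported nested names so a UI could warn about them."""
    return DOTTED.findall(template)


def _to_text(value: object) -> str:
    # JSON objects and arrays are serialized as JSON; other values use str().
    if isinstance(value, (dict, list)):
        return json.dumps(value)
    return str(value)


def render(template: str, values: dict) -> str:
    """Substitute every detected variable, raising KeyError if any value is missing."""
    missing = [name for name in detect_variables(template) if name not in values]
    if missing:
        raise KeyError("missing values for: " + ", ".join(missing))
    return VARIABLE.sub(lambda m: _to_text(values[m.group(1)]), template)


template = "You are a {role} helping with {task}.\n\nUser question: {user_input}\n\nContext: {context}"
print(detect_variables(template))  # ['role', 'task', 'user_input', 'context']
```

Because the pattern only matches identifier-like names, literal braces elsewhere in a prompt (for example, a JSON output format) pass through this sketch unchanged.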

Model Selection

Choose from all configured LLM connections:

| Provider | Models | Features |
|---|---|---|
| OpenAI | GPT-4, GPT-4 Turbo, GPT-3.5 Turbo | Vision, streaming, function calling |
| Anthropic | Claude 3 Opus, Sonnet, Haiku | 200K context, vision, artifacts |
| Qwen | Qwen2.5-72B, Qwen2.5-7B | High-performance Chinese/English |
| Custom | Any OpenAI-compatible API | Self-hosted models |

The model selector displays context window size and capabilities (vision, streaming) as badges.

Parameter Presets

Fine-tune generation behavior with preset configurations:

- Deterministic — Temperature 0, consistent outputs for classification and extraction

- Balanced — Temperature 0.7, general-purpose tasks

- Creative — Temperature 1.2, storytelling and brainstorming

- Diverse — Temperature 0.9 with high penalties, maximum variety

- Custom — Manual control of all parameters

Advanced parameters:

- Temperature (0-2)

- Top-P nucleus sampling (0-1)

- Presence penalty (-2 to 2)

- Frequency penalty (-2 to 2)

- Max tokens

- Stop sequences
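The presets and ranges can be modeled as a small parameter object. This is a sketch under assumptions: the class name `GenerationParams` is hypothetical, defaults other than temperature (top-P 1.0, penalties 0) are assumed, and the "high penalties" of the Diverse preset are not quantified in this page, so 1.0 is a placeholder value.

```python
from dataclasses import dataclass, replace
from typing import Optional, Tuple


@dataclass(frozen=True)
class GenerationParams:
    temperature: float = 0.7
    top_p: float = 1.0
    presence_penalty: float = 0.0
    frequency_penalty: float = 0.0
    max_tokens: Optional[int] = None
    stop: Tuple[str, ...] = ()

    def validate(self) -> None:
        """Raise ValueError if a parameter falls outside the ranges listed above."""
        bounds = {
            "temperature": (self.temperature, 0.0, 2.0),
            "top_p": (self.top_p, 0.0, 1.0),
            "presence_penalty": (self.presence_penalty, -2.0, 2.0),
            "frequency_penalty": (self.frequency_penalty, -2.0, 2.0),
        }
        for name, (value, low, high) in bounds.items():
            if not low <= value <= high:
                raise ValueError(f"{name}={value} is outside [{low}, {high}]")
        if self.max_tokens is not None and self.max_tokens <= 0:
            raise ValueError("max_tokens must be a positive integer")


PRESETS = {
    "deterministic": GenerationParams(temperature=0.0),
    "balanced": GenerationParams(temperature=0.7),
    "creative": GenerationParams(temperature=1.2),
    # "High penalties" is not quantified in the docs; 1.0 is an assumed placeholder.
    "diverse": GenerationParams(temperature=0.9, presence_penalty=1.0, frequency_penalty=1.0),
}

# "Custom": start from a preset and override individual fields.
custom = replace(PRESETS["balanced"], max_tokens=512, stop=("\n\n",))
custom.validate()
```

The ranges here follow the list above; individual providers can accept narrower ranges (Anthropic's API, for example, limits temperature to 0 through 1), so a real client would also need per-provider limits.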

Streaming Responses

All models support real-time streaming. Responses appear token-by-token as the LLM generates them, providing immediate feedback during testing.

Performance metrics displayed:

- Time to first token (TTFT)

- Tokens per second (TPS)

- Total duration

- Token count (input + output)

- Estimated cost (based on provider pricing)
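The metrics above follow from three timestamps and two token counts. A minimal sketch, assuming tokens per second is measured over the window after the first token arrives and that prices are quoted per million tokens; the function name and the prices in the example are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class RunMetrics:
    ttft_s: float  # time to first token, in seconds
    tokens_per_second: float
    total_s: float
    input_tokens: int
    output_tokens: int
    estimated_cost_usd: float


def compute_metrics(
    started_at: float,
    first_token_at: float,
    finished_at: float,
    input_tokens: int,
    output_tokens: int,
    input_price_per_million: float,
    output_price_per_million: float,
) -> RunMetrics:
    ttft = first_token_at - started_at
    total = finished_at - started_at
    # Assumption: throughput is measured over the generation window after the first token.
    window = finished_at - first_token_at
    tps = output_tokens / window if window > 0 else 0.0
    cost = (input_tokens * input_price_per_million
            + output_tokens * output_price_per_million) / 1_000_000
    return RunMetrics(ttft, tps, total, input_tokens, output_tokens, cost)


# Hypothetical prices in USD per million tokens, for illustration only.
m = compute_metrics(0.0, 0.5, 2.5, input_tokens=1_000, output_tokens=400,
                    input_price_per_million=3.0, output_price_per_million=15.0)
print(m.ttft_s, m.tokens_per_second, m.total_s)  # 0.5 200.0 2.5
```

In practice the timestamps would come from a monotonic clock such as `time.monotonic()` rather than wall-clock time.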

Multi-Modal Support

Vision-capable models (GPT-4 Vision, Claude 3) support image attachments:

- Click the image upload button

- Select image files (JPEG, PNG, GIF, WebP)

- Images are automatically resized and base64-encoded

- Add multiple images per prompt

Supported use cases:

- Document analysis (invoices, receipts, contracts)

- Visual QA (describe this image, what’s wrong here?)

- OCR and text extraction

- Diagram interpretation

Large images are automatically resized to 2048px max dimension to reduce API costs. Original aspect ratios are preserved.
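The resize rule amounts to scaling both sides by the same factor so the longer side becomes at most 2048px. A minimal sketch of that arithmetic plus base64 encoding into a data URL; the helper names are hypothetical, the actual pixel resampling would be done by an image library, and the data-URL form is one common way to send images (some providers instead take raw base64 plus a media type).

```python
import base64

MAX_DIMENSION = 2048


def fit_within(width: int, height: int, max_dim: int = MAX_DIMENSION) -> tuple[int, int]:
    """Scale (width, height) so the longer side is at most max_dim, keeping the aspect ratio."""
    longest = max(width, height)
    if longest <= max_dim:
        return width, height  # already small enough; never upscale
    # Multiply before dividing to keep the arithmetic exact for integer inputs.
    return max(1, round(width * max_dim / longest)), max(1, round(height * max_dim / longest))


def to_data_url(image_bytes: bytes, mime_type: str) -> str:
    """Base64-encode image bytes as a data URL, e.g. for an image_url field."""
    return f"data:{mime_type};base64,{base64.b64encode(image_bytes).decode('ascii')}"


print(fit_within(4096, 3072))  # (2048, 1536)
```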

Using the Playground

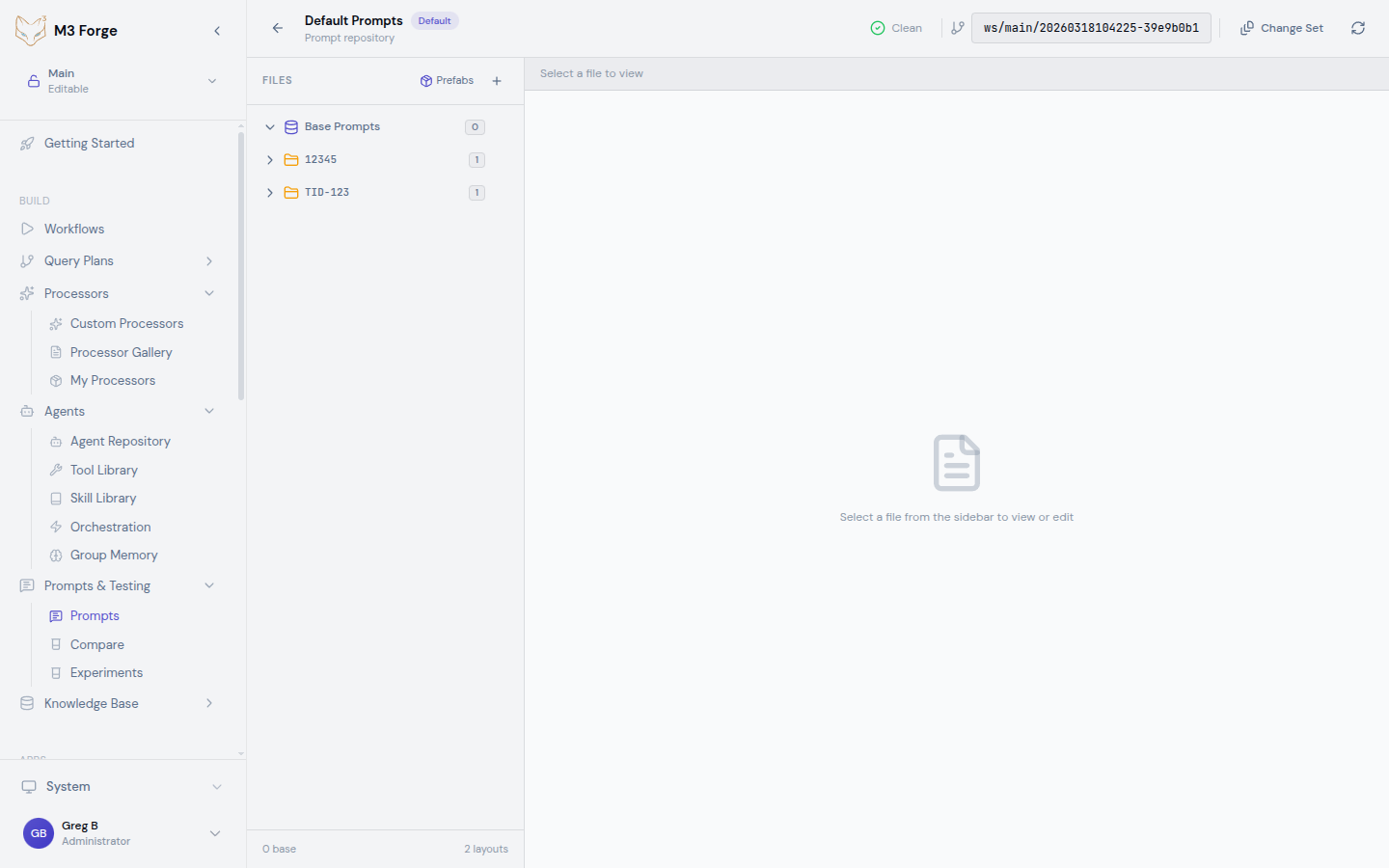

Select a Prompt

Navigate to a prompt repository and click any prompt file. The editor opens in the main panel.

Open Playground

Click “Test in Playground” from the toolbar or use the keyboard shortcut Cmd+Shift+P.

The Playground opens as a side panel showing:

- Prompt template preview

- Auto-detected variable inputs

- Model selector

- Parameter controls

Configure Test

- Choose a model from the dropdown

- Fill variable values in the auto-generated form

- Adjust parameters using presets or custom values

- Attach images if testing vision models (optional)

Run Test

Click “Run” or press Cmd+Enter. The response streams in real-time with metrics displayed at the bottom.

Save or Compare

Successful tests can be:

- Saved to the test history for later reference

- Exported as JSON for analysis

- Compared against other test runs to evaluate quality differences

Advanced Features

Test History

Every test run is saved with metadata:

- Timestamp

- Model and parameters used

- Variable values

- Full response text

- Performance metrics

Access history from the sidebar to replay tests or compare results across different configurations.
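A history entry with the metadata listed above could be stored and exported as JSON like this. The record shape, field names, and helpers (`PlaygroundRun`, `export_runs`, `diff_parameters`) are hypothetical; the Playground's actual export format may differ.

```python
import json
from dataclasses import asdict, dataclass, field
from datetime import datetime, timezone


@dataclass
class PlaygroundRun:
    model: str
    parameters: dict
    variables: dict
    response_text: str
    metrics: dict
    # ISO 8601 UTC timestamp recorded when the run is created.
    timestamp: str = field(default_factory=lambda: datetime.now(timezone.utc).isoformat())


def export_runs(runs: list) -> str:
    """Serialize runs to a JSON array for offline analysis."""
    return json.dumps([asdict(run) for run in runs], indent=2)


def diff_parameters(a: PlaygroundRun, b: PlaygroundRun) -> dict:
    """Return {name: (a_value, b_value)} for every parameter that differs between two runs."""
    keys = sorted(set(a.parameters) | set(b.parameters))
    return {k: (a.parameters.get(k), b.parameters.get(k))
            for k in keys if a.parameters.get(k) != b.parameters.get(k)}
```

Comparing parameters separately from responses makes it easy to see which configuration change accompanies a quality difference.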

JSON Mode

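For structured outputs, enable JSON mode in parameter settings. JSON mode constrains the model to return syntactically valid JSON that can be parsed directly into application logic; it does not by itself enforce the field names or types you request, so check the parsed result.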

Example prompt for JSON mode:

```text
Extract customer information as JSON.

Input: "John Smith, john@example.com, lives in Seattle"

Output format:
{
  "name": "string",
  "email": "string",
  "location": "string"
}
```

The response is guaranteed valid JSON: {"name": "John Smith", "email": "john@example.com", "location": "Seattle"}
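Since JSON mode ensures the output parses but not that it matches the requested shape, a short validation step can confirm the fields from the customer-extraction example. A minimal sketch; `parse_customer` and the expected-field table are hypothetical and mirror that example's format.

```python
import json

# Field names and types taken from the example's output format.
EXPECTED_FIELDS = {"name": str, "email": str, "location": str}


def parse_customer(raw: str) -> dict:
    """Parse a JSON-mode response and check the expected fields are present with the right types."""
    data = json.loads(raw)  # raises json.JSONDecodeError (a ValueError) on malformed output
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    for key, expected_type in EXPECTED_FIELDS.items():
        if not isinstance(data.get(key), expected_type):
            raise ValueError(f"field {key!r} is missing or not {expected_type.__name__}")
    return data


customer = parse_customer('{"name": "John Smith", "email": "john@example.com", "location": "Seattle"}')
print(customer["location"])  # Seattle
```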

System Prompts

Separate system and user prompts for better control:

- System prompt — Defines agent behavior (e.g., “You are a helpful assistant”)

- User prompt — Contains the task or question

Both support variables and can be tested independently.
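Providers carry the system prompt differently: OpenAI-style chat APIs take it as a message with the `system` role, while Anthropic's Messages API takes it as a separate top-level `system` field. A minimal sketch of filling both templates and producing either shape; the helper names are hypothetical.

```python
import re
from typing import Dict, List, Tuple

_VARIABLE = re.compile(r"\{([A-Za-z0-9_]+)\}")


def fill(template: str, values: Dict[str, str]) -> str:
    """Replace {name} placeholders; a missing value raises KeyError."""
    return _VARIABLE.sub(lambda m: str(values[m.group(1)]), template)


def build_messages(system_template: str, user_template: str,
                   values: Dict[str, str]) -> List[Dict[str, str]]:
    """OpenAI-style messages: the system prompt travels as a 'system' role message."""
    return [
        {"role": "system", "content": fill(system_template, values)},
        {"role": "user", "content": fill(user_template, values)},
    ]


def split_system(messages: List[Dict[str, str]]) -> Tuple[str, List[Dict[str, str]]]:
    """Anthropic-style: pull system text out into its own field, keep the rest as messages."""
    system = "\n\n".join(m["content"] for m in messages if m["role"] == "system")
    rest = [m for m in messages if m["role"] != "system"]
    return system, rest


msgs = build_messages("You are a {role}.", "Help with {task}.",
                      {"role": "support agent", "task": "billing"})
```

Keeping the two templates separate is what lets either one be changed and retested while the other stays fixed.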

Streaming Control

Pause or cancel streaming responses:

- Pause — Temporarily stop streaming without losing progress

- Cancel — Abort the request and discard partial results

- Resume — Continue from where streaming paused
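The three controls can be modeled as a small state machine over a stream of text chunks. This is a simplified sketch: `StreamController` is hypothetical, and it treats pausing as "stop consuming the iterator". Over a real network connection the server keeps sending while paused, so an implementation would need to buffer incoming chunks instead.

```python
from typing import Iterable, Iterator, List

_END = object()  # sentinel marking an exhausted stream


class StreamController:
    """Toy model of pause, resume, and cancel over an iterator of text chunks."""

    def __init__(self, chunks: Iterable[str]) -> None:
        self._source: Iterator[str] = iter(chunks)
        self._received: List[str] = []
        self.paused = False
        self.cancelled = False

    def pause(self) -> None:
        self.paused = True  # stop consuming; chunks already received are kept

    def resume(self) -> None:
        self.paused = False  # continue from the next unread chunk

    def cancel(self) -> None:
        self.cancelled = True
        self._received.clear()  # discard partial results

    def pump(self, max_chunks: int) -> int:
        """Consume up to max_chunks chunks unless paused or cancelled; return how many were added."""
        count = 0
        while count < max_chunks and not (self.paused or self.cancelled):
            chunk = next(self._source, _END)
            if chunk is _END:
                break
            self._received.append(chunk)
            count += 1
        return count

    def text(self) -> str:
        return "".join(self._received)
```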

Best Practices

Variable Naming

Use descriptive, consistent names:

- Good: {customer_name}, {order_id}, {support_tier}

- Bad: {x}, {temp}, {data}

Parameter Selection

Choose presets based on use case:

- Classification/Extraction → Deterministic

- General conversation → Balanced

- Content generation → Creative

- Brainstorming → Diverse

Cost Optimization

Reduce API costs during testing:

- Limit max tokens to reasonable values

- Use smaller models (e.g., GPT-3.5 Turbo rather than GPT-4) for initial iterations

- Compress images before upload

- Monitor cost per test in the metrics panel

Test with the cheapest model first (Claude Haiku, GPT-3.5 Turbo). Upgrade to premium models only after validating prompt structure.

Keyboard Shortcuts

| Action | Shortcut |
|---|---|
| Open Playground | Cmd+Shift+P |
| Run test | Cmd+Enter |
| Clear response | Cmd+K |
| Toggle parameters | Cmd+/ |
| Save result | Cmd+S |

Next Steps

- Compare prompt variants across branches

- Run A/B experiments for optimization

- Deploy tested prompts to production workflows