LLM Connections

Configure multiple LLM providers and models for diverse AI capabilities, cost optimization, and failover redundancy.

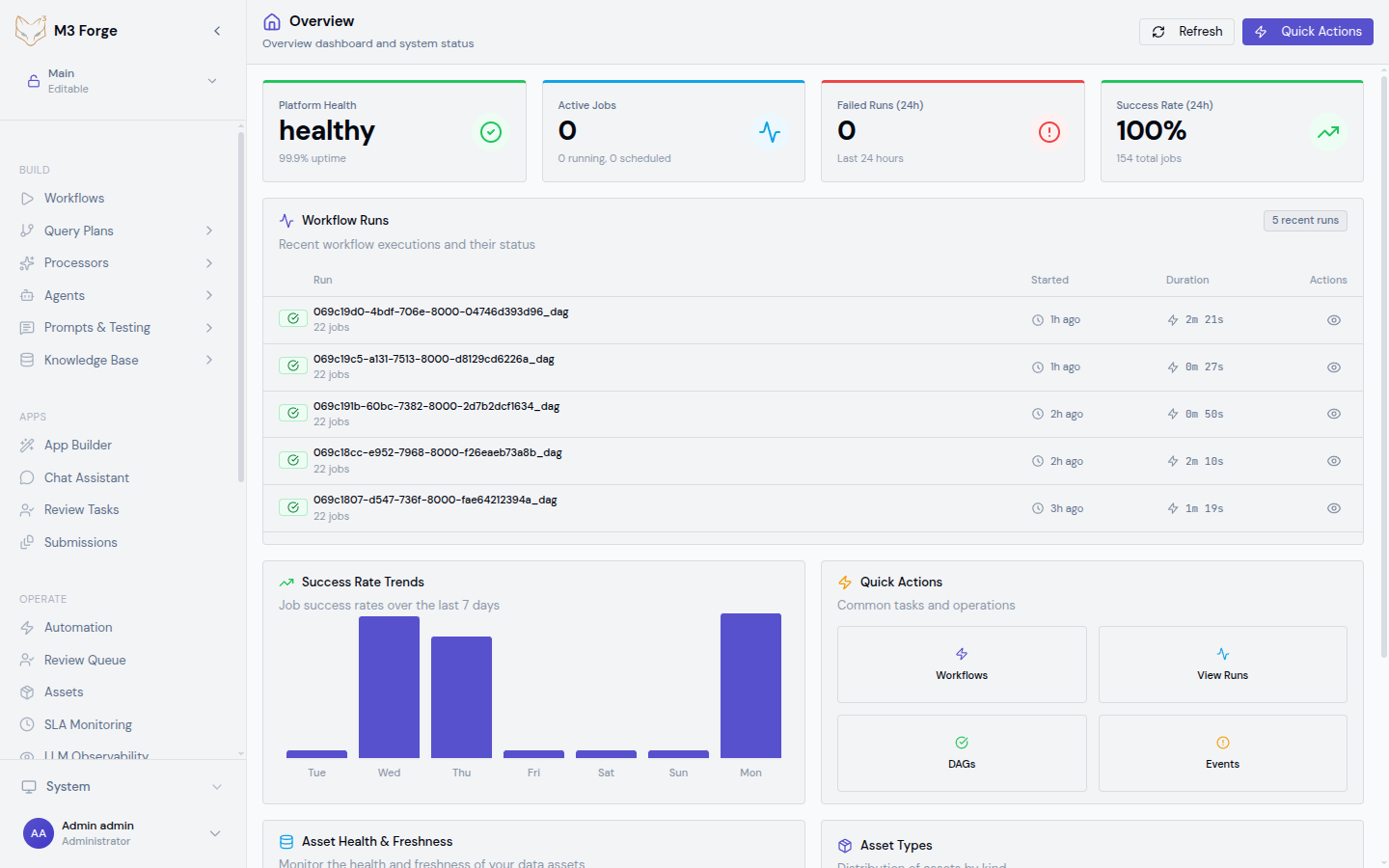

Overview

M3 Forge supports connections to multiple large language model providers, enabling you to:

- Use the best model for each task — GPT-4o for structured extraction, Claude for long documents

- Optimize costs — Route simple tasks to cheaper models, complex tasks to premium models

- Ensure availability — Failover to secondary provider if primary has an outage

- Meet compliance requirements — Use regional endpoints or private deployments

- Experiment with new models — Test cutting-edge capabilities without migration

Each workspace configures its own LLM connections with separate API keys and settings.

Supported Providers

M3 Forge integrates with major LLM providers:

| Provider | Models | Key Features | Use Cases |
|---|---|---|---|
| OpenAI | GPT-4o, GPT-4 Turbo, GPT-4, GPT-3.5 Turbo | Function calling, vision, JSON mode, embeddings | General purpose, structured extraction, code generation |
| Anthropic | Claude 3.5 Sonnet, Claude 3 Opus, Claude 3 Haiku | 200K context, extended thinking, refusal resistance | Long documents, complex reasoning, safety-critical applications |
| Azure OpenAI | GPT-4o, GPT-4, GPT-3.5 | Enterprise SLA, regional deployment, compliance | Enterprise deployments, HIPAA/SOC2 compliance |
| Google Vertex AI | Gemini 1.5 Pro, Gemini 1.5 Flash | Multimodal, 2M context, grounding | Document understanding, video analysis, large context needs |
| AWS Bedrock | Claude, Llama, Titan | AWS-native, data residency, IAM integration | AWS-centric architectures, regulated industries |
| Qwen | Qwen-2.5, Qwen-VL | Multilingual (120+ languages), vision | Non-English content, international deployments |

Adding an LLM Connection

Navigate to LLM Connections

From workspace settings, go to Settings → LLM Connections.

Click Add Connection

Select New LLM Connection and choose the provider type.

Configure Connection

Provide provider-specific details:

OpenAI

OpenAI Configuration:

- API Key — From OpenAI platform (starts with `sk-`)

- Organization ID — Optional, for organization billing tracking

- Base URL — Leave default unless using a proxy

- Models — Select available models to enable (GPT-4o, GPT-4 Turbo, etc.)

Optional:

- Rate limit — Max requests per minute (default: 10,000)

- Timeout — Request timeout in seconds (default: 60)

Test Connection

Click Test Connection to verify:

- API key is valid

- Network connectivity is working

- Models are accessible

The test makes a simple API call and displays the response.
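To make the idea of a "simple API call" concrete, here is a minimal sketch of what such a check could look like against OpenAI's public model-listing endpoint. It is not M3 Forge's actual implementation; the function names `build_test_request` and `run_connection_test` are hypothetical, and only the Python standard library is used.

```python
import json
import urllib.error
import urllib.request


def build_test_request(api_key: str, base_url: str = "https://api.openai.com/v1") -> urllib.request.Request:
    """Build a GET request that lists models, a cheap way to check a key."""
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/models",
        headers={"Authorization": f"Bearer {api_key}"},
        method="GET",
    )


def run_connection_test(api_key: str, timeout: float = 60.0) -> dict:
    """Return a small status dict instead of raising, so a UI could display it."""
    request = build_test_request(api_key)
    try:
        with urllib.request.urlopen(request, timeout=timeout) as response:
            body = json.load(response)
        # A successful listing shows the key is valid and which models it can reach.
        return {"ok": True, "models": [m["id"] for m in body.get("data", [])]}
    except urllib.error.HTTPError as err:
        # 401 usually points to a bad key; 429 to a rate limit or exhausted quota.
        return {"ok": False, "error": f"HTTP {err.code}"}
    except (urllib.error.URLError, TimeoutError) as err:
        # DNS failures, refused connections, and timeouts end up here.
        return {"ok": False, "error": f"network: {err}"}
```

The three outcomes map onto the three checks listed above: a successful listing covers key validity and model access, while the network branch covers connectivity.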

Save

The connection is saved and is immediately available for use in workflows and prompts.

API keys are encrypted at rest using AES-256. They are only decrypted when making API calls to providers.

Managing Connections

Viewing Connections

The LLM Connections page displays all configured providers:

| Provider | Models | Status | Usage (30d) | Actions |
|---|---|---|---|---|
| OpenAI | GPT-4o, GPT-3.5 Turbo | ✓ Active | 145,234 requests | Edit, Test, Disable |
| Anthropic | Claude 3.5 Sonnet | ✓ Active | 23,891 requests | Edit, Test, Disable |
| Azure OpenAI | GPT-4 Turbo | ⚠ Warning | 2,103 requests | Edit, Test, Enable |

Status indicators:

- ✓ Active — Connection working normally

- ⚠ Warning — Recent errors or approaching rate limits

- ✗ Error — Connection failing (invalid key, network issue, quota exceeded)

Editing Connections

To update a connection:

- Click Edit next to the connection

- Modify settings (API key, enabled models, rate limits)

- Click Test Connection to verify changes

- Save

Changes take effect immediately for new workflow runs.

Disabling Connections

To temporarily disable a connection without deleting it:

- Click Disable

- Confirm action

Disabled connections:

- Cannot be used in new workflow runs

- Remain visible in configuration

- Can be re-enabled anytime

Useful for:

- Pausing a connection that is temporarily over quota with its provider

- Testing failover behavior

- Rotating API keys

Deleting Connections

To permanently remove a connection:

- Click Delete

- Confirm deletion

Deleting a connection used by active workflows will cause those workflows to fail. Update workflows to use a different connection first.

Model Selection

Different models have different capabilities and costs. Choose appropriately:

By Task Type

| Task | Recommended Model | Reasoning |
|---|---|---|
| Structured extraction | GPT-4o, Claude 3.5 Sonnet | JSON mode, high accuracy |
| Long document analysis | Claude 3.5 Sonnet, Gemini 1.5 Pro | 200K+ context windows |
| Simple classification | GPT-3.5 Turbo, Claude 3 Haiku | Fast and cost-effective |
| Code generation | GPT-4o, Claude 3.5 Sonnet | Strong coding capabilities |
| Multilingual | Qwen-2.5, Gemini 1.5 Pro | Broad language support |
| Vision tasks | GPT-4o, Qwen-VL, Gemini 1.5 | Image understanding |

By Cost

From most to least expensive (approximate; provider pricing changes often, so confirm current rates before relying on this ordering):

- Premium tier — GPT-4o, Claude 3 Opus, Gemini 1.5 Pro

- Mid tier — GPT-4 Turbo, Claude 3.5 Sonnet

- Efficient tier — GPT-3.5 Turbo, Claude 3 Haiku, Gemini 1.5 Flash

Use premium models only when necessary for accuracy or capabilities.

By Context Length

| Model | Context Window | Best For |
|---|---|---|
| Gemini 1.5 Pro | 2M tokens | Entire codebases, book-length documents |
| Claude 3.5 Sonnet | 200K tokens | Long contracts, research papers |
| GPT-4o | 128K tokens | Multi-page documents |
| GPT-3.5 Turbo | 16K tokens | Short documents, chat |
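The context-length table can be turned into a simple selection rule. The sketch below picks the smallest listed window that fits a prompt plus some room for the response. The window sizes are the rounded figures from the table, and the helper name `smallest_model_that_fits` and the `reserved_output_tokens` parameter are illustrative assumptions, not part of M3 Forge.

```python
from typing import Optional

# Rounded context windows (in tokens) copied from the table above.
CONTEXT_WINDOWS = {
    "GPT-3.5 Turbo": 16_000,
    "GPT-4o": 128_000,
    "Claude 3.5 Sonnet": 200_000,
    "Gemini 1.5 Pro": 2_000_000,
}


def smallest_model_that_fits(prompt_tokens: int, reserved_output_tokens: int = 1_000) -> Optional[str]:
    """Return the model with the smallest window that still fits the request."""
    needed = prompt_tokens + reserved_output_tokens
    candidates = [
        (window, name) for name, window in CONTEXT_WINDOWS.items() if window >= needed
    ]
    # min() compares window sizes first, so the tightest fit wins.
    return min(candidates)[1] if candidates else None


print(smallest_model_that_fits(10_000))    # a short document
print(smallest_model_that_fits(150_000))   # a long contract
```

Choosing the tightest fit is only one heuristic. In practice you would likely combine it with the task and cost guidance above.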

Rate Limiting and Quotas

Prevent runaway costs and comply with provider limits:

Connection-Level Rate Limits

Set per-connection rate limits:

- Requests per minute — Max API calls per minute (default: provider limit)

- Tokens per minute — Max tokens consumed per minute

- Daily budget — Max spend per day in USD

When limits are reached, additional requests are queued or rejected based on configuration.
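As an illustration of the queue-or-reject behavior, the following sketch enforces a requests-per-minute limit with a sliding 60-second window. The class name `RequestsPerMinuteLimiter` and the `on_limit` values `"reject"` and `"queue"` are assumptions for this example; M3 Forge's actual setting names may differ.

```python
import time
from collections import deque


class RequestsPerMinuteLimiter:
    """Sliding-window limiter that either queues or rejects excess requests."""

    def __init__(self, max_per_minute: int, on_limit: str = "reject", clock=time.monotonic):
        if on_limit not in ("reject", "queue"):
            raise ValueError("on_limit must be 'reject' or 'queue'")
        self.max_per_minute = max_per_minute
        self.on_limit = on_limit
        self.clock = clock          # injectable so the limiter can be tested
        self.sent = deque()         # timestamps of requests sent in the last 60 s
        self.queued = deque()       # requests waiting for capacity

    def _expire(self, now: float) -> None:
        # Drop timestamps that have left the 60-second window.
        while self.sent and now - self.sent[0] >= 60.0:
            self.sent.popleft()

    def submit(self, request) -> str:
        now = self.clock()
        self._expire(now)
        if len(self.sent) < self.max_per_minute:
            self.sent.append(now)
            return "sent"
        if self.on_limit == "queue":
            self.queued.append(request)
            return "queued"
        return "rejected"

    def drain(self) -> list:
        """Release queued requests that now fit within the limit."""
        now = self.clock()
        self._expire(now)
        released = []
        while self.queued and len(self.sent) < self.max_per_minute:
            self.sent.append(now)
            released.append(self.queued.popleft())
        return released
```

A tokens-per-minute or daily-budget limit could follow the same pattern by tracking token counts or spend instead of request timestamps.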

Usage Monitoring

Monitor consumption in the Usage tab:

Daily Requests:

Chart showing requests per day over the last 30 days

Cost Breakdown:

| Model | Requests | Input Tokens | Output Tokens | Cost |
|-------|----------|--------------|---------------|------|
| GPT-4o | 12,345 | 1.2M | 456K | $89.23 |
| Claude 3.5 Sonnet | 8,901 | 890K | 234K | $67.12 |

Top Workflows by Cost:

| Workflow | Requests | Cost |
|----------|----------|------|
| contract-extraction | 3,456 | $52.31 |
| fraud-detection | 2,103 | $31.87 |

Quota Alerts

Set up alerts for:

- Daily spend threshold — Email when spending exceeds $X per day

- Rate limit approaching — Warn at 80% of rate limit

- Error rate spike — Alert when error rate > 5%

Configure alerts in Settings → Notifications.
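The three alert conditions can be expressed as a small check over a usage snapshot. This is a sketch only: the `UsageSnapshot` fields and the alert names are hypothetical, while the 80% and 5% thresholds come from the list above.

```python
from dataclasses import dataclass


@dataclass
class UsageSnapshot:
    spend_today_usd: float
    requests_last_minute: int
    rate_limit_per_minute: int
    errors: int
    total_requests: int


def triggered_alerts(
    usage: UsageSnapshot,
    daily_spend_threshold_usd: float,
    rate_limit_warn_ratio: float = 0.80,
    error_rate_threshold: float = 0.05,
) -> list:
    """Return the names of the alerts whose conditions are currently met."""
    alerts = []
    if usage.spend_today_usd > daily_spend_threshold_usd:
        alerts.append("daily_spend")
    if (
        usage.rate_limit_per_minute > 0
        and usage.requests_last_minute >= rate_limit_warn_ratio * usage.rate_limit_per_minute
    ):
        alerts.append("rate_limit_approaching")
    # Guard against division by zero when no requests have been made yet.
    if usage.total_requests > 0 and usage.errors / usage.total_requests > error_rate_threshold:
        alerts.append("error_rate_spike")
    return alerts
```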

Failover and Load Balancing

Configure multiple connections for high availability:

Failover Configuration

In workflow node configuration, specify primary and fallback providers:

    {
      "node_type": "llm",
      "config": {
        "primary_connection": "openai-production",
        "fallback_connections": ["azure-openai", "anthropic"],
        "failover_on": ["rate_limit", "timeout", "server_error"]
      }
    }

If the primary connection fails, the system automatically retries with the fallback connections.
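The retry behavior can be sketched as a loop over the primary connection followed by the fallbacks. The code below assumes, without confirmation from the product, that fallbacks are tried in the order listed and that errors outside `failover_on` are raised immediately. `ProviderError`, `call_with_failover`, and the `send` callable are hypothetical names.

```python
class ProviderError(Exception):
    """Hypothetical error type carrying a failure kind such as 'rate_limit'."""

    def __init__(self, kind: str, message: str = ""):
        super().__init__(message or kind)
        self.kind = kind


def call_with_failover(config: dict, send, prompt: str):
    """Try the primary connection, then each fallback, for eligible errors only."""
    order = [config["primary_connection"], *config.get("fallback_connections", [])]
    failover_on = set(config.get("failover_on", []))
    last_error = None
    for connection in order:
        try:
            return connection, send(connection, prompt)
        except ProviderError as err:
            if err.kind not in failover_on:
                raise  # e.g. an invalid key: retrying elsewhere would hide the problem
            last_error = err
    # Every connection failed with an eligible error; surface the last one.
    raise last_error


config = {
    "primary_connection": "openai-production",
    "fallback_connections": ["azure-openai", "anthropic"],
    "failover_on": ["rate_limit", "timeout", "server_error"],
}
```

Here `send(connection, prompt)` stands in for whatever function actually issues the request over a named connection.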

Load Balancing

Distribute requests across multiple connections:

    {
      "node_type": "llm",
      "config": {
        "connections": ["openai-key-1", "openai-key-2", "openai-key-3"],
        "load_balancing": "round_robin"
      }
    }

Strategies:

- Round robin — Rotate through connections evenly

- Least loaded — Use connection with most available quota

- Random — Random selection

Useful for staying under per-key rate limits when making many requests.
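The three strategies differ only in how the next connection is chosen, which the sketch below illustrates. Only `round_robin` appears as an identifier in the configuration above; the strings `least_loaded` and `random`, the `remaining_quota` mapping, and the `make_selector` helper are assumptions made for this example.

```python
import itertools
import random


def make_selector(connections, strategy="round_robin", remaining_quota=None, rng=None):
    """Return a zero-argument function that picks the next connection name."""
    if strategy == "round_robin":
        cycle = itertools.cycle(connections)
        return lambda: next(cycle)
    if strategy == "least_loaded":
        if remaining_quota is None:
            raise ValueError("least_loaded needs a remaining_quota mapping")
        # Re-read the mapping on every call so quota updates are respected.
        return lambda: max(connections, key=lambda name: remaining_quota[name])
    if strategy == "random":
        rng = rng or random.Random()
        return lambda: rng.choice(connections)
    raise ValueError(f"unknown strategy: {strategy}")


pick = make_selector(["openai-key-1", "openai-key-2", "openai-key-3"])
print([pick() for _ in range(4)])
```

With round robin, the fourth call wraps back to the first key, which is what keeps each key's share of traffic even.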

Security Best Practices

API Key Management

- Rotate keys quarterly — Update API keys every 3 months

- Use separate keys per environment — Different keys for dev, staging, production

- Limit key permissions — Use provider-level scoping when available

- Never commit keys to Git — Use environment variables or secret management
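For scripts that call providers outside M3 Forge, reading the key from the environment keeps it out of source control. This is a minimal sketch; `OPENAI_API_KEY` is the variable name the OpenAI SDK conventionally reads, and `load_api_key` and `mask_key` are hypothetical helpers.

```python
import os


def load_api_key(env_var: str = "OPENAI_API_KEY") -> str:
    """Read an API key from the environment and fail loudly if it is missing."""
    key = os.environ.get(env_var, "").strip()
    if not key:
        raise RuntimeError(f"Set the {env_var} environment variable; do not hard-code keys.")
    return key


def mask_key(key: str) -> str:
    """Return a log-safe form such as 'sk-...7890' that never exposes the full key."""
    if len(key) <= 8:
        return "***"
    return f"{key[:3]}...{key[-4:]}"
```

Using a different variable name per environment (dev, staging, production) is one way to keep the keys separate, as recommended above.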

Least Privilege

- Workspace isolation — Each workspace has its own connections and keys

- Role-based access — Only Admins can configure LLM connections

- Audit logging — All connection changes are logged with user attribution

Compliance

For regulated industries:

- Use regional endpoints — Azure/Bedrock for data residency requirements

- Enable audit logs — Export connection usage for compliance reporting

- Implement approval workflows — Require review before adding new providers

- Monitor data exfiltration — Alert on unusual prompt patterns

See security standards for complete guidance.

Advanced Configuration

Custom Endpoints

For self-hosted or proxy deployments:

- Edit connection

- Override Base URL with custom endpoint

- Optionally add Custom headers for authentication

- Test connection to verify compatibility
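To show how a Base URL override and custom headers might combine into a single request, here is a sketch that targets an OpenAI-compatible chat completions route. It assumes the proxy exposes `/chat/completions` under the base URL and that custom headers are sent as-is; the function name `build_chat_request` and the model name `my-model` are placeholders.

```python
import json
import urllib.request


def build_chat_request(base_url: str, custom_headers: dict, model: str, prompt: str) -> urllib.request.Request:
    """Build a POST request for an OpenAI-compatible endpoint behind a proxy."""
    headers = {"Content-Type": "application/json", **custom_headers}
    body = json.dumps(
        {"model": model, "messages": [{"role": "user", "content": prompt}]}
    ).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/chat/completions",
        data=body,
        headers=headers,
        method="POST",
    )


request = build_chat_request(
    base_url="https://my-llm-proxy.example.com/v1",
    custom_headers={
        "Authorization": "Bearer my-custom-token",
        "X-Deployment-Region": "us-east-1",
    },
    model="my-model",  # placeholder; use a model your endpoint actually serves
    prompt="ping",
)
print(request.full_url)
```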

Example: OpenAI-compatible endpoint:

    Base URL: https://my-llm-proxy.example.com/v1
    Custom headers:
      Authorization: Bearer my-custom-token
      X-Deployment-Region: us-east-1

Prompt Caching

For providers supporting prompt caching (Anthropic, some OpenAI deployments):

- Enable Prompt caching in connection settings

- Configure Cache TTL (time to live)

- System automatically caches prompt prefixes to reduce costs

Useful for:

- Large system prompts repeated across requests

- Document context reused in multiple queries
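For context, this is roughly what prefix caching looks like at the provider level with Anthropic's Messages API, where a `cache_control` marker on a system block asks the provider to cache everything up to that point. How M3 Forge sets this internally is not documented here, so treat the helper `build_cached_request` as an illustrative assumption.

```python
def build_cached_request(system_prompt: str, question: str,
                         model: str = "claude-3-5-sonnet-20241022") -> dict:
    """Build a Messages API payload whose large system prompt is marked cacheable."""
    return {
        "model": model,
        "max_tokens": 1024,
        "system": [
            {
                "type": "text",
                "text": system_prompt,
                # Marks the end of the prefix the provider may cache and reuse.
                "cache_control": {"type": "ephemeral"},
            }
        ],
        "messages": [{"role": "user", "content": question}],
    }


contract_text = "...full contract text reused across queries..."
first = build_cached_request(contract_text, "Who are the parties?")
second = build_cached_request(contract_text, "What is the termination clause?")
```

Both payloads share an identical system block, so the second request can reuse the cached prefix while only the short question changes.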

Streaming

Enable streaming responses for real-time user feedback:

- Enable Streaming support in connection settings

- In workflow nodes, set `stream: true`

- Frontend displays partial responses as they arrive

Improves perceived latency for long-form generation.
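To illustrate what "partial responses" look like on the wire, the sketch below parses server-sent event lines in the format OpenAI's chat completions API uses when streaming is on. The function name `iter_stream_text` is hypothetical, and other providers use different chunk shapes.

```python
import json


def iter_stream_text(lines):
    """Yield text fragments from OpenAI-style 'data: {...}' stream lines."""
    for raw in lines:
        line = raw.strip()
        if not line.startswith("data:"):
            continue  # skip blank keep-alive lines and comments
        data = line[len("data:"):].strip()
        if data == "[DONE]":
            break  # sentinel that marks the end of the stream
        chunk = json.loads(data)
        choices = chunk.get("choices") or []
        if not choices:
            continue
        text = choices[0].get("delta", {}).get("content")
        if text:
            yield text


sample = [
    'data: {"choices": [{"delta": {"role": "assistant"}}]}',
    "",
    'data: {"choices": [{"delta": {"content": "Hel"}}]}',
    'data: {"choices": [{"delta": {"content": "lo"}}]}',
    "data: [DONE]",
]
for fragment in iter_stream_text(sample):
    print(fragment, end="", flush=True)
```

A frontend would append each fragment to the display as it arrives instead of waiting for the full response.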

Troubleshooting

Connection Test Failing

Cause: Invalid API key, network connectivity issue, or quota exceeded.

Solution:

- Verify API key is correct and has not expired

- Check provider dashboard for quota/billing status

- Test network connectivity to provider endpoint

- Review error message for specific issue

Rate Limit Errors

Cause: Exceeded provider’s rate limit.

Solution:

- Reduce connection-level rate limit to stay under provider limit

- Add failover connections to distribute load

- Implement request queuing in workflows

- Contact provider to increase quota
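A common companion to request queuing is retrying with exponential backoff when a rate limit error comes back. The sketch below shows the general technique and is not a documented M3 Forge feature; `RateLimitError`, `backoff_delays`, and `call_with_backoff` are hypothetical names.

```python
import random
import time


class RateLimitError(Exception):
    """Hypothetical error raised when the provider returns a rate limit response."""


def backoff_delays(max_retries: int = 5, base: float = 1.0, cap: float = 30.0) -> list:
    """Delays that double on each retry and never exceed the cap."""
    return [min(cap, base * 2 ** attempt) for attempt in range(max_retries)]


def call_with_backoff(send, max_retries: int = 5, base: float = 1.0, cap: float = 30.0,
                      sleep=time.sleep, rng=random.random):
    """Call send(), waiting longer after each rate limit error."""
    for delay in backoff_delays(max_retries, base, cap):
        try:
            return send()
        except RateLimitError:
            # Jitter (50-100% of the delay) keeps many clients from retrying in lockstep.
            sleep(delay * (0.5 + rng() / 2))
    return send()  # final attempt; any error now propagates to the caller
```

With the defaults, the delays before jitter are 1, 2, 4, 8, and 16 seconds.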

High Latency

Cause: Network distance to provider, large prompts, or model overload.

Solution:

- Use regional endpoints closer to your deployment

- Optimize prompts to reduce token count

- Switch to a faster model (for example, GPT-3.5 Turbo instead of GPT-4)

- Enable prompt caching for repeated content

Unexpected Costs

Cause: Inefficient prompts or workflow execution loops.

Solution:

- Review top workflows by cost in usage dashboard

- Optimize prompts to reduce output tokens

- Add budget alerts to catch spikes early

- Implement caching to reduce redundant calls

Model Not Available

Cause: Model not enabled in connection or provider account lacks access.

Solution:

- Check connection settings and enable desired model

- Verify provider account has access (some models require approval)

- Update to latest API version if model is newly released

Next Steps

- Configure workspaces for provider isolation

- Set up users and roles to control who configures connections

- Create workflows using your configured LLM connections

- Monitor usage and costs in the monitoring dashboard